Nvidia is using deceptive practices and abusing its market dominance to quash the competition, according to Cerebras Systems CEO Andrew Feldman, who was responding to Nvidia’s unexpected announcement of its latest GPU product roadmap in October 2023.

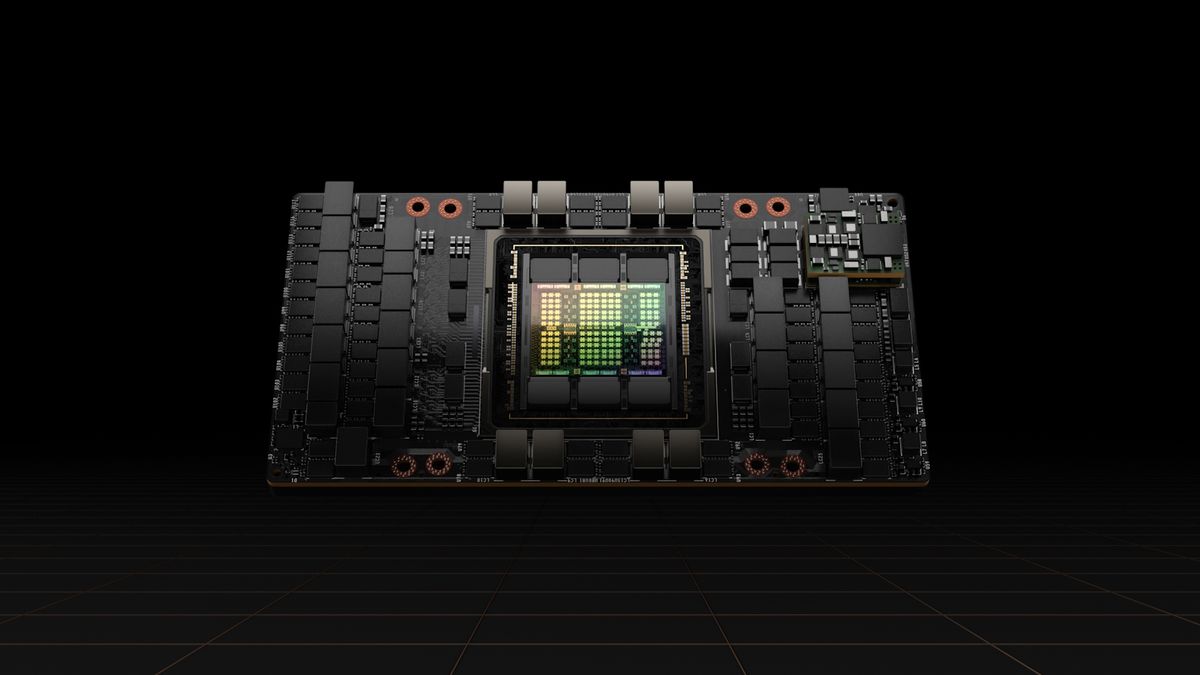

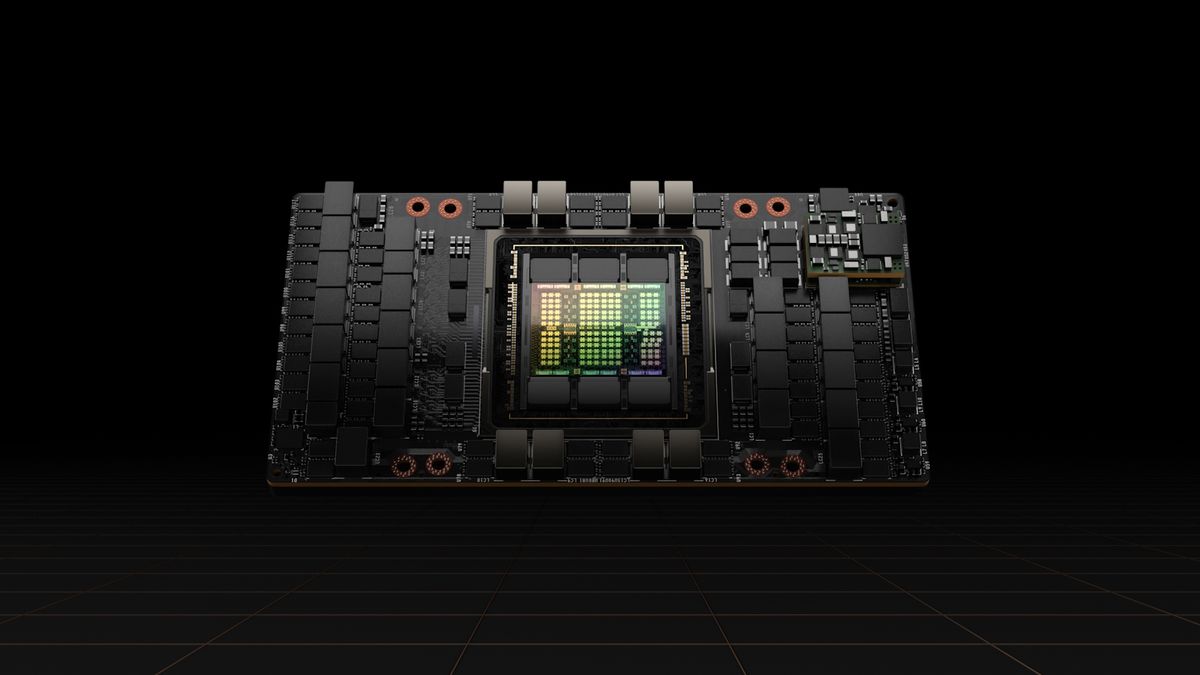

Nvidia outlined new GPUs set for annual release between 2024 and 2026, adding to the industry-leading A100 and H100 GPUs that are currently in high demand, with organizations across the industry snapping them up for generative AI workloads.

But speaking to HPCWire, Feldman labelled the news a “predatory pre-announcement”, noting that Nvidia has no obligation to follow through on releasing any of the components it has teased. He speculated that the announcement has only confused the market, especially given that Nvidia was, by his account, a year late with the H100 GPU. He also doubts that Nvidia can follow through on this strategy, or that it would even want to.

Nvidia is just ‘throwing sand up in the air’

Nvidia teased yearly leaps on a single architecture in its announcement, with the Hopper Next succeeding the Hopper GPU in 2024, and the Ada Lovelace-Next GPU, a successor to the Ada Lovelace graphics card, set for release in 2025.

“Companies have been making chips for a long time, and nobody has ever been able to succeed on a one-year cadence because the fabs do not change at a one-year pace,” Feldman countered to HPCWire.

“In many ways, it has been a terrible block of time for Nvidia. Stability AI said they were going to go on Intel. Amazon said the Anthropic was going to run on them. We announced a monstrous deal that would produce enough compute so it would be clear that you could build… large clusters with us.

“[Nvidia’s] response, not surprising to me, in the strategy realm, is not a better product. It’s… throw sand up in the air and move your hands a lot. And you know, Nvidia was a year late with the H100.”

Feldman’s firm designed the world’s largest AI chip, the Cerebras Wafer-Scale Engine 2 processor, which measures 46,225 square millimeters and contains 2.6 trillion transistors across 850,000 cores.

He told the New Yorker that massive chips are better than smaller ones because cores communicate faster when they’re on the same chip rather than being scattered across a server room.
