Radar, a nearly century-old technology, is turning out to be far more useful in modern computing and electronics than anyone might have thought.

It’s being added to light bulbs to help detect whether someone has fallen and can’t get up, and a few years ago it appeared in Google’s Pixel 4 Android phone to enable touch-free, gesture-based controls.

Now Google’s Advanced Technology and Projects (ATAP) group is pushing the technology further, using radar (radio detection and ranging) to detect people, their actions, their orientation and position relative to the sensors, and, most importantly, the meaning of that movement and interaction.

A new mesh

On Tuesday, Google’s ATAP group, which is responsible for research into wearable technology and the Soli radar in the Pixel 4, unveiled its latest Soli research project, which focuses on non-verbal interactions with the radar system.

In a video on YouTube, the researchers explain how they use a mesh network of Soli radars, which can be embedded in devices like Google Nest, to detect people as they move around and near the devices.

The “ambient, socially aware devices” use Soli radars and machine learning, including deep learning, to build an understanding of what our actions mean. Everything from a wave of the hand or a turn of the head to how far we are from a device, how fast we move past it, and even how our bodies are oriented toward it tells the system something about our intent.

“We’re inspired by how people interact with one another. As humans, we understand each other intuitively, without saying a single word. We pick up on social cues, subtle gestures that we innately understand and react to,” says Leonardo Giusti, Google ATAP Head of Design, in the video.

The clear goal is to have computers understand us as if, well, they were a little more human.

Radar sensors, together with that AI, help the devices understand the social context around them and act accordingly.

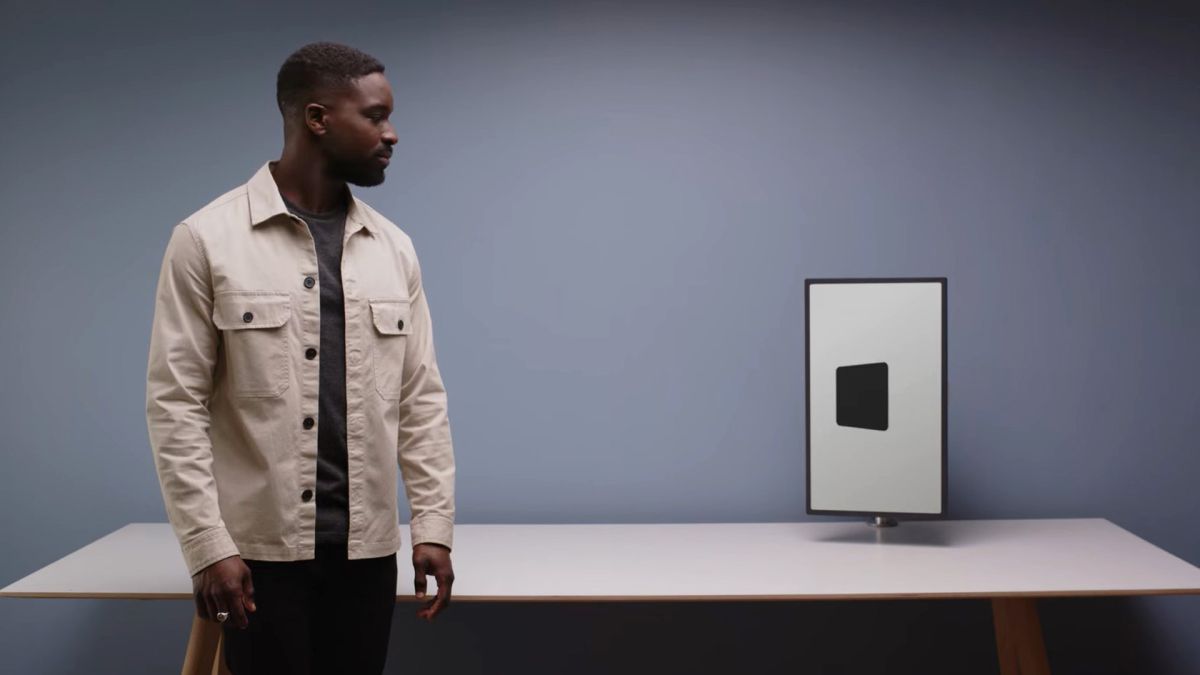

In the video, the researchers showed opaque screens noticing when people glanced at them. The devices could tell the difference between someone deliberately giving the screen their full attention and someone merely glancing at it while walking by. In one example, a small white screen briefly displayed rain and an umbrella to suggest to someone who was leaving that they might want to grab an umbrella.

It was somewhat entertaining to hear the researchers describe the area around a computer as “its personal space.” Even so, the idea is clear: a Soli radar-equipped computer might respond to an approaching person more like another person would than like a computer. Instead of waiting for you to touch the keyboard before waking up, it might sense your approach and boot up.

“Approach” is actually one of the team’s new interaction primitives. The others are “leave,” “glance,” and “pass.” The device’s actions will differ depending on which movement primitive it detects.
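To make the idea of primitives a little more concrete, here is a toy sketch of how a device might map a short track of observations to one of the four primitives and then pick a response. It is purely illustrative and is not Google’s method: the team says it uses deep learning, whereas this stand-in uses a few hand-written rules. The `Sample` type, the `classify` function, the thresholds, and the responses are all hypothetical.

```python
from dataclasses import dataclass

# Hypothetical thresholds chosen for illustration only, not values from Google.
APPROACH_M = 0.5   # net change in distance (meters) that counts as approaching or leaving
PASS_DIP_M = 0.3   # how much closer mid-track than at both ends to count as passing by


@dataclass
class Sample:
    distance_m: float      # estimated distance from the device, in meters
    facing_device: bool    # whether head/body orientation points at the device


def classify(track: list[Sample]) -> str:
    """Map a short track of samples to one interaction primitive (toy rules)."""
    if len(track) < 2:
        return "none"
    start, end = track[0].distance_m, track[-1].distance_m
    net_change = end - start
    facing = sum(s.facing_device for s in track) / len(track)  # fraction of samples facing
    closest = min(s.distance_m for s in track)

    if net_change <= -APPROACH_M:
        return "approach"
    if net_change >= APPROACH_M:
        return "leave"
    if 0 < facing < 0.5:
        return "glance"    # looked briefly without moving much toward or away
    if facing == 0 and closest < start - PASS_DIP_M and closest < end - PASS_DIP_M:
        return "pass"      # came closer, then moved away again, without looking
    return "none"


# Hypothetical responses, loosely echoing the examples in the video.
RESPONSES = {
    "approach": "wake the screen",
    "leave": "show a weather reminder",
    "glance": "show a brief notification",
    "pass": "stay in the background",
    "none": "do nothing",
}

if __name__ == "__main__":
    # Someone walks by and looks at the device once.
    track = [Sample(2.0, False), Sample(1.2, True), Sample(2.0, False), Sample(2.1, False)]
    primitive = classify(track)
    print(primitive, "->", RESPONSES[primitive])  # prints: glance -> show a brief notification
```

A real system would have to cope with noisy radar estimates and far subtler cues, which is presumably why the researchers lean on learned models rather than fixed rules like these.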

“Our technique uses advanced algorithms, including deep learning, to understand the nuance of people’s subtle body language,” said Eiji Hayashi, Google ATAP Human Interactive Lead, in the video.

Those nuances include using head orientation to infer where someone’s attention is focused, and they could also mean the Soli radar system recognizes when you cock your head to signal, “I don’t understand.”

One benefit of using radar rather than optical sensors for intent detection is that radar doesn’t “see” anything and doesn’t collect images. It simply uses radio waves to build a picture of motion and position. It’s up to the software to make sense of it.

Even though some radar technology already ships in consumer electronics, Google’s ATAP Soli research project is nowhere near becoming a product. Maybe that’s why a radar-enabled future still sounds so very 23rd century.

“These devices are designed to cater to us from the background in a quiet, respectful way,” said Timi Oyedeji, Google ATAP Interaction Designer, in the video. “You can get these gentle prompts and have interactions that are helpful but not obtrusive.”