
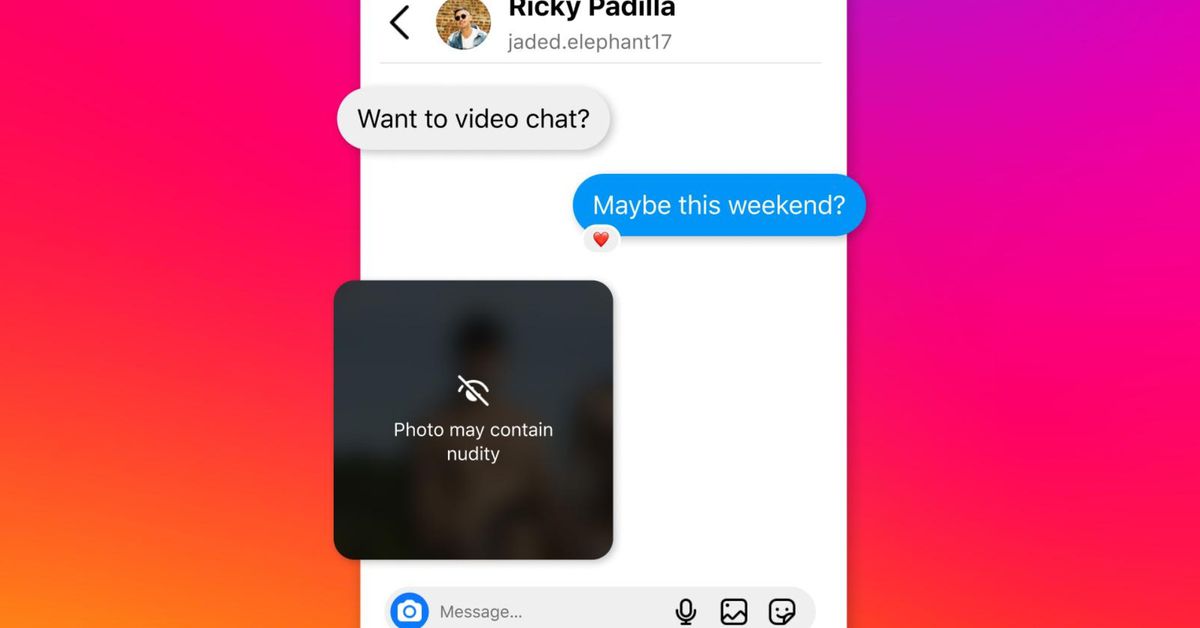

Instagram is preparing to roll out a new safety feature that blurs nude images in messages, as part of efforts to protect minors on the platform from abuse and sexually exploitative scams.

Announced by Meta on Thursday, the new feature — which both blurs images detected to contain nudity and discourages users from sending them — will be enabled by default for teenage Instagram users, as identified by the birthday information on their account. A notification will also encourage adult users to turn it on.

According to The Wall Street Journal, the new feature will be tested in the coming weeks, with a global rollout expected over the next few months. Meta says the feature uses on-device machine learning to analyze whether an image sent via Instagram’s direct messaging service contains nudity, and that the company won’t have access to these images unless they’ve been reported.

When the protection is enabled, Instagram users who receive nude photographs will be presented with a message telling them not to feel pressured to respond, alongside options to block and report the sender. “This feature is designed not only to protect people from seeing unwanted nudity in their DMs, but also to protect them from scammers who may send nude images to trick people into sending their own images in return,” Meta said in its announcement.

Users who try to DM a nude will also see a message warning them about the dangers of sharing sensitive photos, while another warning message will discourage users who attempt to forward a nude image they’ve received.

This is the latest of Meta’s attempts to bolster protections for children on its platforms. The company backed a tool for taking sexually explicit images of minors offline in February 2023, and earlier this year it restricted minors’ access to harmful topics like suicide, self-harm, and eating disorders.
