At GTC 2022, Nvidia unveiled its new Grace CPU Superchip, designed for AI infrastructure and high-performance computing.

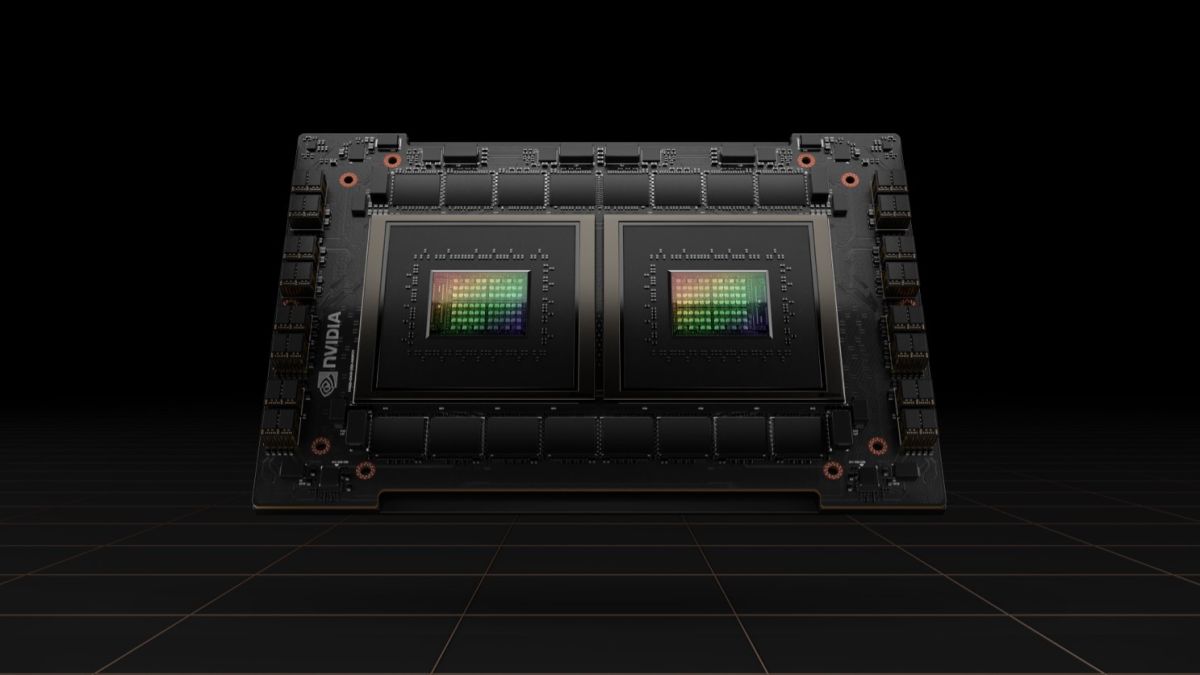

The new 144-core, Arm Neoverse-based discrete data center CPU actually comprises two CPU chips connected over NVLink-C2C, the company’s high-speed, low-latency chip-to-chip interconnect.

Nvidia’s Grace CPU Superchip complements its Grace Hopper Superchip, which was announced last year and features one CPU and one GPU on one carrier board. While their makeup differs, both superchips share the same underlying CPU architecture as well as the NVLink-C2C interconnect.

Nvidia founder and CEO Jensen Huang explained in a press release why the Grace CPU Superchip is suited to AI workloads, saying:

“A new type of data center has emerged — AI factories that process and refine mountains of data to produce intelligence. The Grace CPU Superchip offers the highest performance, memory bandwidth and NVIDIA software platforms in one chip and will shine as the CPU of the world’s AI infrastructure.”

Nvidia H100 and DGX H100

To power the next wave of AI data centers, Nvidia also announced its next-generation accelerated computing platform, built on the Nvidia Hopper architecture, which succeeds the company’s Nvidia Ampere architecture that launched two years ago.

The chipmaker also announced its first Hopper-based GPU, the Nvidia H100, which is packed with 80bn transistors. Nvidia describes the H100 as the world’s largest and most powerful accelerator to date. Like the Grace CPU Superchip, it features NVLink interconnect, and it is aimed at advancing gigantic AI language models, deep recommender systems, genomics and complex digital twins.

For enterprises that want even more power, Nvidia’s DGX H100 (its fourth-generation DGX system) features eight H100 GPUs and can deliver 32 petaflops of AI performance at the new FP8 precision. This provides the scale to meet the massive compute requirements of large language models, recommender systems, healthcare research and climate science. All of the GPUs in a DGX H100 system are connected by NVLink, which provides 900GB/s of connectivity.

The company’s new Hopper architecture has already received broad industry support from leading cloud computing providers: Alibaba Cloud, AWS, Baidu AI Cloud, Google Cloud, Microsoft Azure, Oracle Cloud and Tencent Cloud all plan to offer H100-based instances. At the same time, Cisco, Dell Technologies, HPE, Inspur, Lenovo and other system manufacturers are planning to release servers with H100 accelerators.

Nvidia’s H100 GPU is expected to be available worldwide later this year from cloud service providers, computer makers and Nvidia directly.